Gemma 4 vs Llama 4 vs Qwen 3.5: The 2026 Open Source Model Showdown

Gemma 4 vs Llama 4 vs Qwen 3.5: The 2026 Open Source Model Showdown

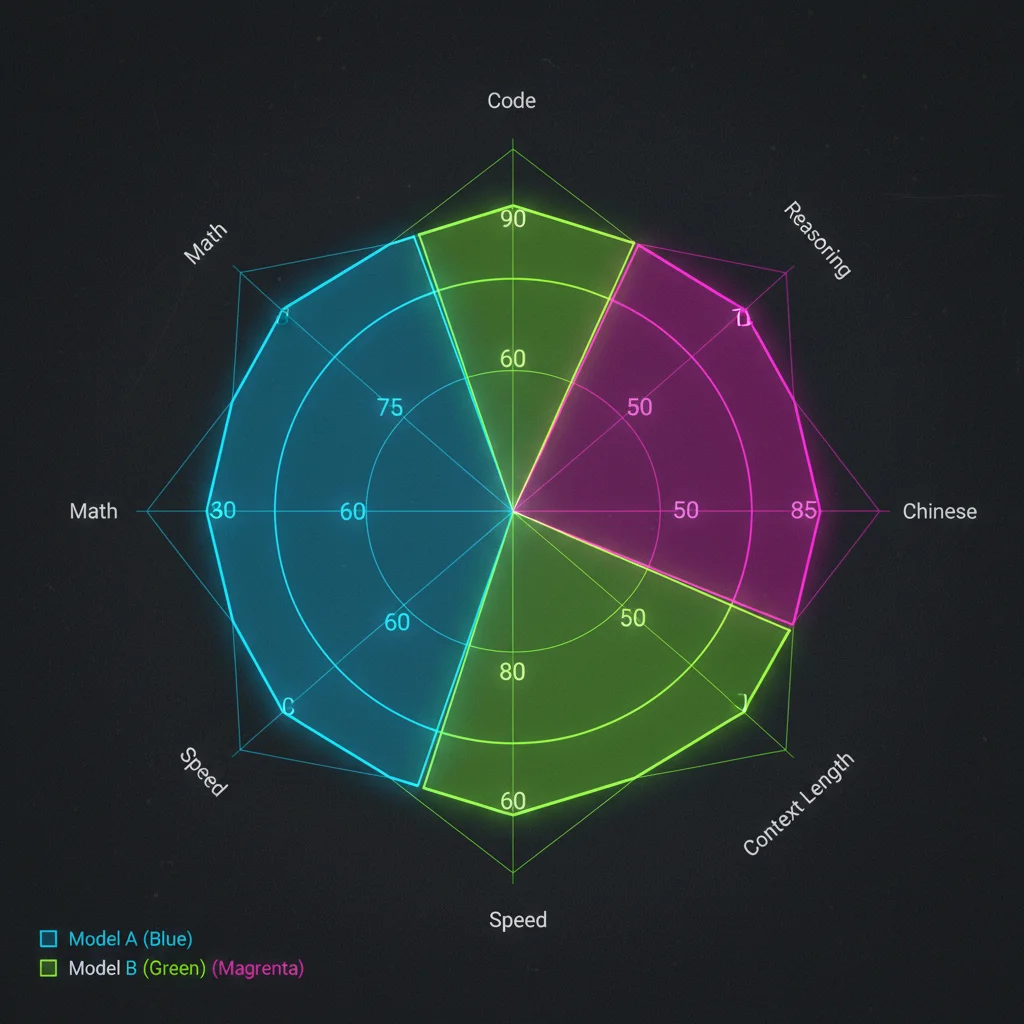

TL;DR: The three open-source model giants each win different battles in 2026. Gemma 4 leads in math reasoning (AIME 89.2%) and ships under Apache 2.0. Qwen 3.5 edges ahead on MMLU Pro (86.1%) and Chinese language tasks. Llama 4 owns the long-context market with its 10M token window, but the 700M MAU license restriction is a serious concern. The right model depends entirely on your use case.

You're evaluating open-source models. Three contenders sit in front of you: Google's Gemma 4, Meta's Llama 4, and Alibaba's Qwen 3.5. All three claim to be the best open-source model of 2026. All three have impressive benchmark numbers.

But you only need one.

This article won't tell you which one is "the best" — because that question is fundamentally wrong. I'll use actual benchmark data, inference speed tests, licensing analysis, and Chinese language comparisons to help you find the one that fits your specific scenario. If you're not yet familiar with Gemma 4's overall landscape, start with the Gemma 4 Complete Guide.

Evaluating which open-source model fits your product best? Book a free AI selection consultation and let us recommend based on your actual requirements.

The 2026 Open Source Battlefield: Three Giants, Three Strategies

Each company has a fundamentally different motivation for open-sourcing models, and that directly shapes their design priorities.

Google (Gemma 4) plays the "ecosystem gateway" strategy. By releasing Gemma 4 under Apache 2.0 with zero restrictions, Google aims to get developers building on Gemma, eventually funneling them into Google Cloud and Vertex AI. The full size range from 2.3B to 31B ensures Gemma has a presence everywhere — from edge devices to cloud servers.

Meta (Llama 4) plays "platform defense." Meta needs to ensure AI technology isn't monopolized by a few companies, so they open-source their models. But the Llama Community License retains a 700M MAU cap — in plain English: you can use it for free, just don't use it to compete with us. Llama 4 ranges from Scout (109B) to Maverick (400B), targeting the large-model segment.

Alibaba (Qwen 3.5) plays "global expansion." Qwen has a natural advantage in Chinese language capabilities, and with Apache 2.0 licensing plus native multimodal support (including video and audio input), it aims to become the default choice across Asian markets. The range from 0.8B to 397B MoE is the widest of the three.

Understanding these strategic differences matters. When you choose a model, you're also choosing which ecosystem to join.

Specs Comparison: Parameters, Context Window, and Licensing

Let's start with hard specs. Numbers don't lie.

Full Comparison Table

| Spec | Gemma 4 31B | Llama 4 Scout | Qwen 3.5 27B |

|---|---|---|---|

| Total Parameters | 31B (Dense) | 109B (MoE) | 27B (Dense) |

| Active Parameters | 31B | 17B | 27B |

| Context Window | 256K tokens | 10M tokens | 128K tokens |

| License | Apache 2.0 | Llama Community License | Apache 2.0 |

| Multimodal | Text + Image | Text + Image | Text + Image + Video + Audio |

| MoE Variant | 26B A4B (3.8B active) | Scout is MoE | 35B-A3B (3B active) |

| Release Date | April 2026 | April 2025 | February 2026 |

Several things worth highlighting:

The context window gap is massive. Llama 4 Scout's 10M token context window is 40-80x larger than the other two. If your application needs to process extremely long documents — entire books, large codebases — Llama 4 is in a league of its own on this dimension.

The active parameter trap. Llama 4 Scout has 109B total parameters but only activates 17B per forward pass. This means you need to load 109B worth of model weights into memory while only using 17B of compute. VRAM requirements are much higher than you might expect.

Multimodal capability divergence. Qwen 3.5 is currently the only open-source model family with native video and audio input support. If your application involves video understanding or speech processing, Qwen 3.5 is a turnkey solution — the other two require additional integration work.

For a deep dive into Gemma 4's architecture design (MoE, Dual RoPE, and how the 256K context is achieved), see Gemma 4 Architecture Deep Dive.

Benchmark Showdown: Who Wins at What

Benchmarks aren't everything, but they're the most objective starting point. The following data comes from official technical reports and independent evaluation platforms.

Core Benchmark Comparison

| Benchmark | Gemma 4 31B | Llama 4 Scout | Qwen 3.5 27B | What It Measures |

|---|---|---|---|---|

| MMLU Pro | 85.2% | 74.3% | 86.1% | General knowledge & reasoning |

| AIME 2025 | 89.2% | 36.0% | 48.7% | Math competition reasoning |

| GPQA Diamond | 84.3% | 65.2% | 85.5% | Graduate-level science reasoning |

| LiveCodeBench v6 | 80.0% | 53.4% | 72.4% | Code generation |

| Codeforces ELO | 2150 | — | 1850 | Competitive programming |

| Arena AI Ranking | #3 (1441) | #12 | #6 | Human preference voting |

Reading Between the Numbers

Gemma 4 crushes math and code. AIME 89.2% vs Llama 4's 36.0% — this isn't "slightly better," it's a completely different tier. If your application's core is math reasoning or code generation, Gemma 4 is the clear winner.

Qwen 3.5 edges ahead on general reasoning. MMLU Pro 86.1% and GPQA Diamond 85.5%, both roughly 1 percentage point above Gemma 4. For chatbot scenarios requiring broad knowledge coverage, Qwen 3.5 may deliver more consistent results.

Llama 4 Scout trails across the board on benchmarks. As a model released in April 2025, comparing it against early 2026 competitors is admittedly somewhat unfair. But if you're making a decision today, numbers are numbers. Llama 4's advantage isn't in benchmarks — it's in context window.

My personal observation: there's a gap between benchmark scores and real-world experience. The Arena AI human preference ranking (Gemma 4 at #3, Qwen 3.5 at #6) may reflect actual performance better than any single benchmark.

Inference Speed: Who Runs Fastest on the Same Hardware

For developers, inference speed directly impacts user experience and server costs.

RTX 4090 (24 GB VRAM) Benchmarks

| Model | Quantization | tok/s (Generation) | Can Load? | Notes |

|---|---|---|---|---|

| Qwen 3.5 27B | Q4_K_M | ~35 | Yes | Fastest |

| Gemma 4 31B | Q4_K_M | ~25 | Barely (near capacity) | Highest quality |

| Gemma 4 26B MoE | Q4_K_M | ~11 | Yes | MoE routing overhead |

| Llama 4 Scout | — | Cannot load | No | 109B needs multi-GPU |

H100 (80 GB VRAM) Benchmarks

| Model | Precision | tok/s (Generation) | Notes |

|---|---|---|---|

| Qwen 3.5 27B | FP16 | ~95 | Enterprise-grade speed |

| Gemma 4 31B | FP16 | ~75 | Good quality/speed balance |

| Gemma 4 26B MoE | FP16 | ~60 | Low active params but routing overhead |

| Llama 4 Scout | FP8 | ~45 | Barely fits on single card |

Key findings:

Qwen 3.5 27B is the speed king. Whether on consumer or enterprise hardware, Qwen 3.5 27B is the fastest. Its 27B parameter count with well-optimized architecture delivers 35 tok/s on RTX 4090 — smooth enough for interactive applications.

Gemma 4 MoE speed is surprisingly slow. In theory, activating only 3.8B parameters should be blazing fast. In practice, MoE routing overhead plus the need to load all 25.2B weights into VRAM makes it slower than the Dense variant. This is a known issue in the vLLM community, with future optimizations expected.

Llama 4 Scout doesn't fit on a single consumer GPU. With 109B total parameters, an RTX 4090 simply can't hold it. Even on an H100, you need FP8 quantization to barely fit. If your budget only covers consumer-grade GPUs, Llama 4 is automatically out.

For detailed Gemma 4 performance across various hardware configurations, check our Gemma 4 Hardware Requirements Guide.

Need to maximize inference performance on a limited budget? Contact our AI infrastructure team for customized hardware configuration advice.

License Comparison: Apache 2.0 vs Llama Community License

This is the part many engineers overlook — yet it could put your product at legal risk after launch.

Three-Way License Comparison

| Term | Gemma 4 (Apache 2.0) | Llama 4 (Community License) | Qwen 3.5 (Apache 2.0) |

|---|---|---|---|

| Commercial Use | Unrestricted | Conditional | Unrestricted |

| MAU Limit | None | Must apply above 700M MAU | None |

| Branding Requirement | None | Must display "Built with Llama" | None |

| Modification / Fine-tuning | Unrestricted | Allowed but bound by terms | Unrestricted |

| Redistribution | Unrestricted | Must include original license | Unrestricted |

| Use for Training Other Models | Allowed | Prohibited for non-Llama models | Allowed |

| Acceptable Use Policy | None | Yes (Meta-defined) | None |

Why Licensing Matters More Than Benchmarks

The real impact of the 700M MAU limit. You might think 700 million monthly actives is far from your reality. But note: the Llama License calculates MAU across your entire corporate group, not just the product using Llama. If all your company's products collectively exceed 700M MAU, you need to negotiate a license with Meta — and Meta can decide at its "sole discretion" whether to grant it.

The "Built with Llama" branding requirement. If you build a product with Llama 4, you must prominently display the branding on websites, apps, and documentation. For white-label solutions or B2B products, this can be a dealbreaker.

The training restriction you'll miss. You cannot use Llama 4's outputs to train, fine-tune, or improve models outside the Llama family. For teams doing model distillation, this is a fatal limitation.

Apache 2.0's advantage is obvious. Both Gemma 4 and Qwen 3.5 use Apache 2.0, meaning you can do anything — commercial use, modification, redistribution, training other models — with zero restrictions. For startups and enterprises alike, this eliminates all legal uncertainty.

My recommendation: unless you have a very clear reason (like needing the 10M token context), default to Apache 2.0 licensed models. The legal risk isn't worth it.

Chinese Language Comparison: Who Handles Traditional and Simplified Chinese Best

For developers building products for Chinese-speaking markets, Chinese language capability is a critical selection factor.

Chinese Capability Ranking

Based on community testing and public benchmarks:

#1: Qwen 3.5 — The undisputed champion. Alibaba's Qwen series has a natural advantage in Chinese training data quality and volume. Both Traditional and Simplified Chinese performance is excellent, with idiom comprehension, classical Chinese, and Taiwanese usage understanding clearly superior to the other two.

#2: Gemma 4 — Massive improvement. Google significantly increased Chinese data in Gemma 4's training corpus. Traditional Chinese performance is much better than Gemma 3, though it still falls short of Qwen on complex Chinese contexts (wordplay, cultural references).

#3: Llama 4 — Usable but with gaps. Meta's Chinese training data is comparatively lacking. In specialized Chinese scenarios (legal documents, medical reports), noticeable errors occasionally appear. Traditional Chinese support is particularly weaker than the other two.

Practical Chinese Test Comparison

| Test Category | Gemma 4 31B | Llama 4 Scout | Qwen 3.5 27B |

|---|---|---|---|

| Traditional Chinese Summarization | Good | Average | Excellent |

| Simplified Chinese Technical Translation | Good | Good | Excellent |

| Taiwanese Colloquial Understanding | Average | Poor | Good |

| Classical Chinese Translation | Average | Poor | Good |

| Chinese Code Comments | Good | Average | Good |

| Chinese RAG Q&A | Good | Average | Excellent |

If your product primarily serves Chinese-speaking users, Qwen 3.5 is the safest choice. But if Chinese is an add-on requirement rather than a core feature, Gemma 4's Chinese capability is already solid enough.

Need to choose the right model for your Chinese-language AI product? Book a free consultation — we have extensive experience deploying Chinese-optimized models.

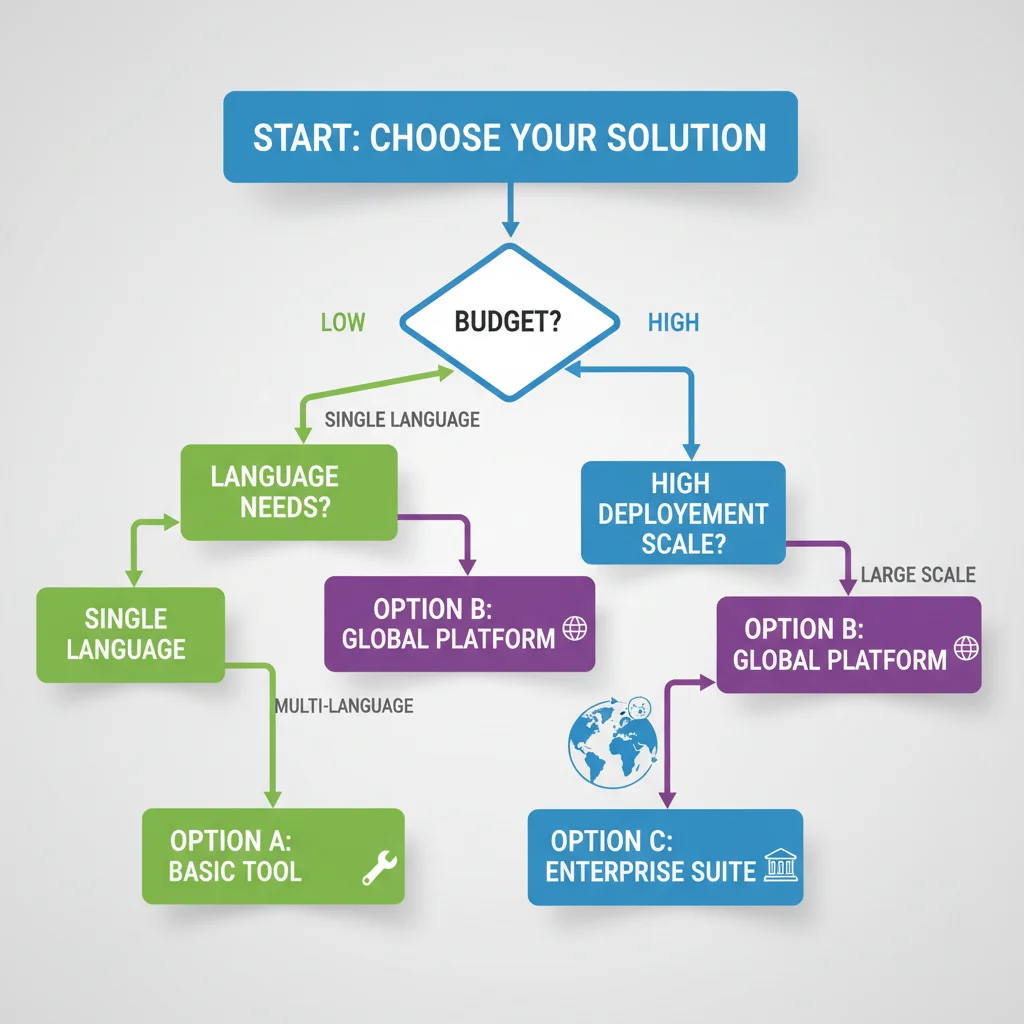

Selection Decision Guide: Which Model for Which Scenario

Theory done. Time to decide. Here are specific recommendations based on different scenarios.

Decision Tree: 5 Questions to Pick Your Model

Question 1: Does your application need to process extremely long text (> 256K tokens)?

- Yes → Llama 4 Scout (10M token context window is irreplaceable)

- No → Continue

Question 2: Is Chinese language capability a core requirement?

- Yes → Qwen 3.5 27B (strongest Chinese, Apache 2.0 worry-free)

- No → Continue

Question 3: Is your hardware budget limited to consumer GPUs (RTX 4090 or below)?

- Yes → Llama 4 Scout is eliminated. Choose Gemma 4 31B or Qwen 3.5 27B

- No → Continue

Question 4: Is your application primarily focused on math reasoning or code generation?

- Yes → Gemma 4 31B (AIME 89.2%, LiveCodeBench 80.0%)

- No → Continue

Question 5: Do you need maximum inference throughput?

- Yes → Qwen 3.5 27B (35 tok/s on RTX 4090, fastest)

- No → Gemma 4 31B (most balanced overall, Apache 2.0 license)

Scenario Recommendation Summary

| Scenario | Recommended Model | Reason |

|---|---|---|

| Chinese Customer Service Chatbot | Qwen 3.5 27B | Best Chinese + fast inference |

| Code Assistant | Gemma 4 31B | Leading LiveCodeBench and Codeforces |

| Legal Document Analysis (Long Text) | Llama 4 Scout | 10M context window |

| Edge Device Deployment | Gemma 4 E2B (2.3B) | Smallest size, Apache 2.0 |

| Multimodal Apps (with Video) | Qwen 3.5 | Only native video input support |

| Startup MVP | Gemma 4 31B | Best quality/speed/license balance |

| Model Distillation / Training | Gemma 4 or Qwen 3.5 | Apache 2.0, free to use for training |

| Enterprise-Scale Deployment | Depends on scenario | See Enterprise Deployment Guide |

Want to learn how to actually deploy Gemma 4 locally? Check our Gemma 4 Local Deployment Tutorial. For a broader API comparison, see Gemini vs OpenAI API Comparison.

Team working on model selection and want more tailored advice? Book an AI technical consultation — we can recommend based on your specific requirements, budget, and tech stack.

Frequently Asked Questions (FAQ)

Both Gemma 4 and Qwen 3.5 are Apache 2.0 — what else differentiates them besides benchmarks?

The biggest difference lies in ecosystem and toolchain integration. Gemma 4 has the deepest integration with Google Cloud, Vertex AI, and Android/Chrome. Qwen 3.5 has the best support within the Alibaba Cloud ecosystem and is the only open-source model with native video and audio input. Additionally, Gemma 4's smallest model (E2B at 2.3B) delivers better quality for edge deployment than Qwen 3.5's smallest (0.8B), though Qwen's 0.8B is physically smaller.

Will Llama 4's 700M MAU limit actually affect me?

If you're a startup or SME, probably not in the short term. But watch out for two things: (1) MAU calculations include all products across your entire corporate group, not just the one using Llama; (2) if your product gets acquired by a larger company, the acquirer's MAU counts too. Choosing an Apache 2.0 model eliminates this risk entirely.

Can I use all three models together?

Yes, and many teams do exactly this. For example: use Gemma 4 for math and code tasks, Qwen 3.5 for Chinese-language tasks, and Llama 4 for tasks requiring ultra-long context. But note that Llama 4's license prohibits using its outputs to train non-Llama models, so be careful about data flow when mixing models.

How will these models evolve in the second half of 2026?

Google has hinted that Gemma 4 Ultra (a larger Dense model) may arrive in Q3. Meta's Llama 5 is expected before year-end. Alibaba's Qwen 4 timeline is unclear, though based on their release cadence, it could land in Q4 2026. My advice: don't bet on future releases — choose what works best for you right now.

Have more technical questions? Contact our AI team directly for free expert advice.

Need Professional Cloud Advice?

Whether you're evaluating cloud platforms, optimizing existing architecture, or looking for cost-saving solutions, we can help

Book Free ConsultationRelated Articles

Gemma 4 Enterprise Adoption Guide: Selection Strategy, Cost Analysis, and Deployment

How should enterprises adopt Gemma 4 in 2026? Complete guide covering model selection for use cases, cloud vs on-premise cost analysis (Vertex AI vs self-hosted GPU), deployment architecture, data security compliance, and a 4-phase rollout roadmap from PoC to production.

AI Dev ToolsGemma 4 Fine-Tuning Guide: LoRA/QLoRA on Consumer GPUs

Complete 2026 Gemma 4 fine-tuning tutorial: LoRA vs QLoRA vs full fine-tuning comparison, Unsloth setup, data preparation, step-by-step training workflow, evaluation methods, and model deployment. A single RTX 4090 is all you need.

AI Dev ToolsGemma 4 API Tutorial: Vertex AI and Google AI Studio Integration Guide

Complete 2026 Gemma 4 API integration tutorial: Google AI Studio for free quick start vs Vertex AI for enterprise deployment. Includes Python code examples, multimodal input, Function Calling, system prompts, and API pricing optimization.