Gemma 4 Enterprise Adoption Guide: Selection Strategy, Cost Analysis, and Deployment

TL;DR: Gemma 4 ships under Apache 2.0, which means full commercial freedom, no MAU limits, and no revenue caps. Four models cover the main enterprise use cases: E4B for customer service, 26B MoE for document processing, 31B Dense for R&D, E2B for edge devices. Vertex AI pay-per-use works best for validation; self-hosted GPU becomes cheaper at sustained high volume, in the tens of millions of tokens per day. Plan for a 4-phase rollout from PoC to production, typically 3-6 months total.

Your engineering team spent three months evaluating LLM options. The report lands on your desk: "We recommend adopting an open-source model, but we're not sure which one."

This scenario plays out daily across enterprises in 2026.

The problem isn't a lack of choices — Gemma 4, Llama 4, and Qwen 3.5 are all excellent open-source models. The problem is figuring out how to choose and how to deploy. Is the license permissive enough? What does the cost structure look like? How do we handle data security? These are the questions keeping tech decision-makers up at night.

This guide takes a pragmatic approach, walking you through model selection, cost analysis, architecture design, compliance, and a production rollout roadmap. For a comprehensive overview of Gemma 4's capabilities, see the Gemma 4 Complete Guide.

Why Enterprises Should Pay Attention to Gemma 4

Let's address the fundamental question first: with so many LLMs available, why should enterprises specifically focus on Gemma 4?

Two words: Apache 2.0.

Gemma 4 is the first model family from Google DeepMind released under the Apache 2.0 license. This isn't just a licensing change — it's a complete commercial liberation. Specifically, Apache 2.0 gives enterprises three critical freedoms:

Unrestricted commercial use. Unlike some "open-source" models that come with MAU (monthly active user) limits or revenue thresholds, Apache 2.0 has zero commercial restrictions. You can embed Gemma 4 in a product you sell, build a SaaS service around it, deploy it on client premises — all completely legal, no additional licensing fees required.

Complete data sovereignty. Self-hosted deployment means all data stays under your control. Customer data never touches Google's servers or any third-party infrastructure. For highly regulated industries like finance, healthcare, and government, this is the primary reason to choose open-source models.

Zero vendor lock-in. Apache 2.0 lets you modify the model architecture, fine-tune parameters, and even redistribute modified versions. If you decide to switch inference frameworks or deployment platforms, you don't need anyone's permission.

Here's how the licensing compares across popular open-source models:

| Model | License | Commercial Restrictions | MAU Limits |
|---|---|---|---|
| Gemma 4 | Apache 2.0 | None | None |
| Llama 4 | Llama Community License | Products above the MAU threshold need a separate license from Meta | Yes (700M MAU) |
| Qwen 3.5 | Apache 2.0 | None | None |

Gemma 4 and Qwen 3.5 are tied on licensing, but Gemma 4 leads across benchmarks — 89.2% on AIME 2026 math reasoning, 85.2% on MMLU Pro, both the highest scores among open-source models. For the full comparison, see Gemma 4 vs Llama 4 vs Qwen 3.5 Complete Showdown.

Enterprise Use Cases for Each Model Variant

Gemma 4 isn't a single model — it's a model family. Choosing the wrong variant doesn't just waste resources; it degrades user experience. Here's the optimal match for each scenario:

E4B (4.3B Parameters): Customer Service and Real-Time Interaction

E4B is the enterprise deployment sweet spot. At 4.3B parameters, it runs on laptop-grade hardware with fast inference — perfect for scenarios demanding instant responses.

Best for:

- Intelligent customer service: sub-200ms response time, multi-turn conversations

- Internal knowledge base queries: paired with RAG for fast document retrieval

- Real-time translation and summarization: handling multilingual customer communications

- Chat bots on messaging platforms: low latency, high concurrency

Hardware: ~3GB VRAM at Q4 quantization. A single RTX 3060 handles it.

26B MoE (25.2B Parameters, 3.8B Active): Document Processing and Data Analysis

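The 26B MoE is the performance-per-dollar champion. Its MoE architecture activates only 3.8B parameters per token, keeping per-token compute close to E4B while delivering near-31B capability. Memory is the exception: all 25.2B parameters still have to be loaded, which is why the VRAM requirement below is much higher than E4B's.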

Best for:

- Contract review and key clause extraction: 256K context window handles entire contracts

- Financial report analysis: extracting structured data from PDFs

- Technical document classification and tagging

- Multi-document cross-referencing: analyzing differences across multiple documents

Hardware: ~16GB VRAM at Q4 quantization. RTX 4090 or RTX 5060 Ti works well.

31B Dense (31B Parameters): R&D and Complex Reasoning

The flagship 31B Dense variant uses all parameters during inference — irreplaceable when deep reasoning is required.

Best for:

- Code generation and review: 80.0% on LiveCodeBench, approaching commercial API levels

- Mathematical modeling and scientific computation: 89.2% on AIME

- Complex decision support systems: multi-step reasoning, causal analysis

- AI-assisted R&D: scenarios demanding the highest output quality

Hardware: ~18GB VRAM at Q4 quantization. RTX 4090/5090 or H100 recommended. For detailed configurations, see the Gemma 4 Hardware Requirements Guide.

E2B (2.3B Parameters): Edge Devices and IoT

E2B is small enough to run on a smartphone and supports native audio input — the go-to choice for edge scenarios.

Best for:

- Factory floor real-time monitoring: paired with cameras for visual inspection

- Retail store AI assistants: running on POS terminals or tablets

- In-vehicle voice assistants: native audio support, works offline

- IoT anomaly detection

Hardware: Just 1.5GB at Q4 quantization. Mid-range Android phones can handle it.
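
The hardware figures quoted for each variant can be sanity-checked with simple arithmetic. The sketch below estimates the memory taken by the weights alone, assuming a nominal 4 bits per weight; real Q4 formats store extra scaling data, and the KV cache and runtime add more on top, so the published figures should sit somewhat above these numbers. The function name and the 4-bit default are illustrative assumptions, not part of any Gemma tooling.

```python
# Weights-only memory estimate. Real usage adds KV cache, activations,
# and quantization metadata, so treat these as lower bounds.
PARAMS_BILLIONS = {  # parameter counts as stated in this guide
    "E2B": 2.3,
    "E4B": 4.3,
    "26B MoE": 25.2,   # all experts must be resident, not just the 3.8B active
    "31B Dense": 31.0,
}


def weight_memory_gb(params_billions: float, bits_per_weight: float = 4.0) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


for name, params in PARAMS_BILLIONS.items():
    print(f"{name}: ~{weight_memory_gb(params):.1f} GB of weights at 4-bit")
```

The gap between these lower bounds and the VRAM figures above is the headroom you need for context length and concurrency.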

Cloud vs On-Premise Deployment: Cost Analysis

This is the question every tech lead wants answered: is Vertex AI or a self-hosted GPU server more cost-effective?

The answer depends on your daily throughput. Here's a cost analysis using 26B MoE as the baseline:

Option A: Vertex AI (Cloud API)

Gemma 4 31B on Vertex AI is priced at $0.14 per million input tokens and $0.40 per million output tokens. The 26B MoE is cheaper still, so the estimates below, which are consistent with the 31B rates, read as an upper bound for it.

| Daily Throughput | Est. Monthly Cost | Notes |
|---|---|---|
| 1M tokens/day | ~$5-8/month | Suitable for PoC |
| 5M tokens/day | ~$25-40/month | Small-scale production |
| 10M tokens/day | ~$50-80/month | Medium scale |
| 50M tokens/day | ~$250-400/month | Consider self-hosting |
| 100M tokens/day | ~$500-800/month | Self-hosting is cheaper |
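
The monthly estimates in the table can be reproduced from the per-token prices. Here is a minimal sketch, assuming a 30-day month and that 25% of tokens are output tokens; both are my assumptions for illustration, and your real input/output mix will shift the result.

```python
INPUT_PRICE_PER_M = 0.14   # USD per million input tokens (31B price quoted above)
OUTPUT_PRICE_PER_M = 0.40  # USD per million output tokens (31B price quoted above)


def vertex_monthly_cost(
    tokens_per_day_m: float,
    output_share: float = 0.25,  # assumed fraction of tokens that are output
    days: int = 30,              # assumed billing month
) -> float:
    """Estimated monthly spend in USD for a daily volume given in millions of tokens."""
    blended = (1 - output_share) * INPUT_PRICE_PER_M + output_share * OUTPUT_PRICE_PER_M
    return tokens_per_day_m * days * blended


for volume in (1, 5, 10, 50, 100):
    print(f"{volume}M tokens/day -> ~${vertex_monthly_cost(volume):,.0f}/month")
```

Raising `output_share` pushes the estimate toward the top of each range in the table, since output tokens cost almost three times as much as input tokens.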

Pros: Zero upfront investment, elastic scaling, no ops burden, SLA guarantees

Cons: Higher long-term costs, data passes through a third party, higher latency, potential hidden costs (logging, networking, and provisioned throughput can add 1.5-2.5x)

Option B: Self-Hosted GPU Server

Using a workstation with an RTX 5090 (32GB VRAM) as an example:

| Item | Cost |
|---|---|
| RTX 5090 | ~$2,000 |
| Workstation (CPU, RAM, PSU, chassis) | ~$2,500 |
| Storage and networking | ~$400 |
| Hardware Total | ~$4,900 |
| Electricity (monthly, 24/7 operation) | ~$50-80 |
| Ops labor (allocated share) | ~$200-500/month |

Average monthly cost (hardware amortized over 3 years): ~$390-720/month (including electricity and ops)
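
The amortized figure comes straight from the table. As a quick sketch of the arithmetic, using straight-line amortization over 36 months (the function and parameter names are just for illustration):

```python
def self_hosted_monthly(
    hardware_usd: float = 4900.0,
    amortization_months: int = 36,
    electricity: tuple = (50.0, 80.0),   # monthly low/high from the table
    ops_labor: tuple = (200.0, 500.0),   # monthly low/high from the table
) -> tuple:
    """Return the (low, high) all-in monthly cost in USD."""
    amortized = hardware_usd / amortization_months
    low = amortized + electricity[0] + ops_labor[0]
    high = amortized + electricity[1] + ops_labor[1]
    return low, high


low, high = self_hosted_monthly()
print(f"All-in monthly cost: ~${low:.0f}-{high:.0f}")
```

Ops labor dominates the total, so how you allocate staff time matters more than the price of the GPU.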

Pros: Predictable long-term costs, data never leaves premises, lowest latency, full autonomy

Cons: High upfront investment, requires DevOps staff, slow to scale, hardware risk on you

Break-Even Point

On the list prices and hardware figures above, self-hosting (~$390-720/month) starts to undercut Vertex AI somewhere between 50 and 100 million tokens per day. Once Vertex AI's hidden costs are included (the 1.5-2.5x noted under Option A), the crossover can fall to roughly 20 million tokens per day. The number also shifts with your specific requirements: high availability (multiple servers) raises the self-hosted side and pushes the break-even point higher.
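
To see how sensitive the break-even point is, you can compute it directly from the figures in this section. The sketch below is an illustration under stated assumptions: a 25% output-token share, a 30-day month, and a `cloud_overhead` multiplier standing in for the hidden costs listed under Option A. None of these come from Google's pricing documentation.

```python
def blended_price_per_m(
    input_price: float = 0.14,
    output_price: float = 0.40,
    output_share: float = 0.25,  # assumed fraction of output tokens
) -> float:
    """USD per million tokens for a given input/output mix."""
    return (1 - output_share) * input_price + output_share * output_price


def break_even_m_tokens_per_day(
    self_hosted_monthly_usd: float,
    cloud_overhead: float = 1.0,  # 1.0 = list price only; 1.5-2.5 = with hidden costs
    days: int = 30,
) -> float:
    """Daily volume (millions of tokens) at which cloud spend equals self-hosted spend."""
    cloud_cost_per_m = blended_price_per_m() * cloud_overhead
    return self_hosted_monthly_usd / (cloud_cost_per_m * days)


for monthly in (390, 720):
    for overhead in (1.0, 2.5):
        volume = break_even_m_tokens_per_day(monthly, cloud_overhead=overhead)
        print(f"self-hosted ${monthly}/mo, overhead {overhead}x -> ~{volume:.0f}M tokens/day")
```

The result moves a lot with the overhead multiplier and the self-hosted ops allocation, so treat any single break-even number as indicative and rerun the calculation with your own token mix.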

My recommendation: Use Vertex AI for PoC and initial validation, then evaluate self-hosting once you've confirmed product-market fit. This lets you validate business value quickly without prematurely committing to hardware costs.

Want to get started with Vertex AI? See the Gemma 4 API Integration Tutorial.

Enterprise Deployment Architecture

Once you've decided on a model and deployment method, the next step is architecture design. Here's our recommended enterprise-grade deployment architecture:

Recommended: API Gateway + Model Service + Cache Layer

User Request

↓

[API Gateway / Load Balancer]

↓

[Auth & Rate Limiting Layer]

↓

[Routing Layer — selects model by task type]

├─ Simple queries → E4B (low latency)

├─ Document analysis → 26B MoE (high quality)

└─ Complex reasoning → 31B Dense (maximum capability)

↓

[Inference Engine — vLLM / TGI / Ollama]

↓

[Response Cache + Logging]

↓

Return to User

Key Design Principles

Smart routing. Not every request needs the largest model. A "What are your business hours?" query works fine with E4B — save the 31B for tasks that genuinely require deep reasoning. Smart routing typically cuts inference costs by 60-70%.
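
A routing layer can start out very simple. The sketch below uses a toy keyword-and-length heuristic to pick a variant; the model identifiers, the keyword list, and the 2,000-token threshold are all hypothetical placeholders, and a production router would more likely use a small classifier or explicit task tags from the calling application.

```python
from enum import Enum


class Task(Enum):
    SIMPLE_QUERY = "simple_query"
    DOCUMENT_ANALYSIS = "document_analysis"
    COMPLEX_REASONING = "complex_reasoning"


# Hypothetical model identifiers; use whatever names your inference engine serves.
ROUTES = {
    Task.SIMPLE_QUERY: "gemma-4-e4b",
    Task.DOCUMENT_ANALYSIS: "gemma-4-26b-moe",
    Task.COMPLEX_REASONING: "gemma-4-31b",
}

# Illustrative trigger words for requests that need deeper reasoning.
REASONING_HINTS = ("prove", "derive", "debug", "refactor", "step by step")


def classify(prompt: str, attachment_tokens: int = 0) -> Task:
    """Toy heuristic: long attachments go to document analysis, hint words to reasoning."""
    if attachment_tokens > 2000:
        return Task.DOCUMENT_ANALYSIS
    if any(hint in prompt.lower() for hint in REASONING_HINTS):
        return Task.COMPLEX_REASONING
    return Task.SIMPLE_QUERY


def route(prompt: str, attachment_tokens: int = 0) -> str:
    """Return the model identifier that should serve this request."""
    return ROUTES[classify(prompt, attachment_tokens)]


print(route("What are your business hours?"))
```

Defaulting to the smallest model and escalating only on a positive signal is what keeps the cost savings; the reverse default quietly sends everything to the 31B.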

Caching strategy. For high-repetition queries (FAQs, product specs), use Redis for response caching. Hit rates of 30-40% are common, directly reducing inference costs by that proportion.
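
The caching idea can be sketched as follows. To keep the example runnable without a server, an in-memory dict stands in for Redis; the class name, the normalization rule, and the one-hour TTL are assumptions for illustration. In production you would store the same key/value pairs in Redis with an expiry.

```python
import hashlib
import time


class ResponseCache:
    """In-memory stand-in for a Redis response cache with a time-to-live."""

    def __init__(self, ttl_seconds: float = 3600.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # key -> (stored_at, response)
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Normalize case and whitespace so trivially different FAQs share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(f"{model}\n{normalized}".encode()).hexdigest()

    def get_or_compute(self, model: str, prompt: str, compute):
        """Return a cached response, or call compute(prompt) and cache the result."""
        key = self._key(model, prompt)
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        response = compute(prompt)
        self._store[key] = (now, response)
        return response
```

Exact-match caching like this only helps with repeated questions; personalized or context-dependent prompts should bypass the cache entirely.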

High availability. Production environments should run at least two inference nodes with health checks and automatic failover. On Kubernetes (GKE or self-managed), configure HPA (Horizontal Pod Autoscaler) to scale on GPU utilization; because HPA only tracks CPU and memory out of the box, GPU utilization has to be exposed to it as a custom metric.

Observability. Log every inference request: input token count, output token count, latency, model version. This data is the foundation for optimization and cost control.
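
One lightweight way to capture those four fields is a structured JSON log line per request. This is a minimal sketch: the record layout and the `log_inference` helper are illustrative, and the `sink` would normally be your logging pipeline rather than `print`.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class InferenceRecord:
    model_version: str
    input_tokens: int
    output_tokens: int
    latency_ms: float
    timestamp: float = field(default_factory=time.time)


def log_inference(record: InferenceRecord, sink=print) -> str:
    """Serialize one request as a JSON line and hand it to the sink."""
    line = json.dumps(asdict(record), sort_keys=True)
    sink(line)
    return line


log_inference(InferenceRecord("gemma-4-e4b", input_tokens=120, output_tokens=45, latency_ms=180.0))
```

Keeping token counts per request is what makes the cost analysis earlier in this guide checkable against real traffic.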

Inference Engine Selection

| Engine | Strengths | Best For |
|---|---|---|
| vLLM | High throughput, PagedAttention, continuous batching | High-concurrency production |
| TGI (Text Generation Inference) | Official Hugging Face, easy integration | HF ecosystem workflows |
| Ollama | One-click install, developer-friendly | Development, small-scale deployment |
| llama.cpp | Ultra-low resource usage, runs on CPU | Edge devices, embedded systems |

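For production, I recommend vLLM. PagedAttention manages the KV cache in fixed-size blocks, which cuts GPU memory waste and, per the vLLM authors, delivers 2-4x higher throughput than earlier serving systems. The advantage is clearest under high concurrency.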

Data Security and Compliance

For regulated industries — finance, healthcare, government — data security isn't "nice to have." It's mandatory. Gemma 4's open-source nature provides inherent compliance advantages, but there are important considerations.

GDPR Compliance

The EU's EDPB Opinion 28/2024 and CNIL's 2026 guidelines explicitly state that AI models trained on personal data fall under GDPR "in most cases." However, since Gemma 4 is a pre-trained model, the enterprise compliance focus shifts to deployment-time concerns:

- Data residency: Self-hosted deployment ensures all inference data stays on your servers, never passing through third parties

- Input data minimization: Send only necessary information to the model; implement PII detection and masking

- Output auditing: Build automated checks ensuring model responses don't contain sensitive information

- Data retention policies: Define clear retention periods and deletion procedures for inference logs
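
The input-minimization step can be sketched with a simple masking pass that runs before a prompt reaches the model. The two regular expressions below are deliberately naive illustrations covering only emails and phone-like digit runs; they will miss many PII types and can produce false positives, so a real deployment should rely on a dedicated PII detection tool.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str) -> str:
    """Replace emails and phone-like sequences with placeholders before inference."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


print(mask_pii("Contact jane.doe@example.com or +1 (555) 010-9999."))
```

Masking before the prompt is logged also keeps PII out of the inference logs, which simplifies the retention policy.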

Industry-Specific Regulations

Beyond GDPR, industries face additional requirements. Financial services typically need SOC 2 attestation alongside compliance with local banking regulations. Healthcare deployments need to address HIPAA (in the US) or equivalent local health data laws. Government use cases often require data sovereignty, meaning data never leaves national borders.

The good news: self-hosted Gemma 4 inherently satisfies data sovereignty requirements because you control exactly where the data lives and flows.

Model Output Auditing

Even the best models hallucinate. Enterprise deployments must include output auditing mechanisms:

- Content filters: Screen for inappropriate, biased, or factually incorrect outputs

- Citation verification: For factual claims, require the model to provide sources and verify them

- Human review workflows: High-stakes decisions (medical advice, legal opinions) must go through human confirmation

- Audit trails: Complete logging of every AI output for post-hoc review
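
The human-review and audit-trail items above can be combined into one gate that every response passes through. This is a sketch under assumptions: the category labels, the status strings, and the list used as a trail are placeholders, and a real system would write to durable, append-only storage.

```python
import hashlib
import time

# Hypothetical category labels; which categories count as high-stakes is a policy decision.
HIGH_STAKES = {"medical", "legal"}


def audit_and_gate(category: str, prompt: str, output: str, trail: list, now=time.time):
    """Record every output; hold high-stakes ones for human confirmation.

    Returns the output if it can be released immediately, otherwise None.
    """
    needs_review = category in HIGH_STAKES
    trail.append({
        "timestamp": now(),
        "category": category,
        # Store a hash of the prompt so the trail itself does not duplicate raw input.
        "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
        "output": output,
        "status": "pending_human_review" if needs_review else "released",
    })
    return None if needs_review else output
```

Logging happens before the release decision, so held responses still appear in the trail for post-hoc review.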

Rollout Roadmap: From PoC to Production

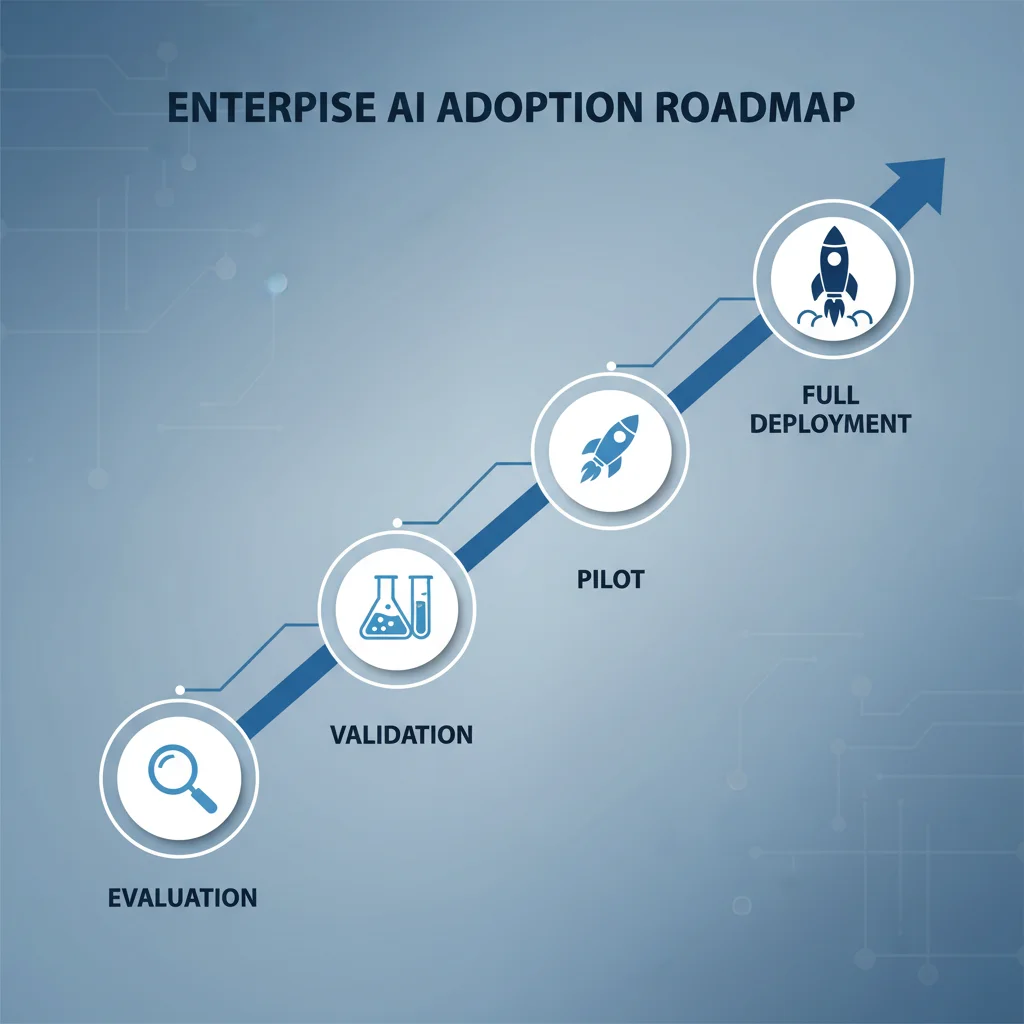

Enterprise AI adoption isn't "install the model and you're done." Based on our experience helping multiple organizations, this 4-phase roadmap significantly reduces failure risk:

Phase 1: Evaluation (2-3 Weeks)

Goal: Determine if Gemma 4 fits your use case

- Define 1-2 target use cases clearly (don't spread too thin)

- Collect real data samples for those scenarios (at least 100-200 examples)

- Run quick tests via Vertex AI API to evaluate model performance

- Compare output quality across model variants (E4B vs 26B MoE vs 31B)

- Produce an evaluation report covering accuracy, latency, and cost estimates

Deliverable: Feasibility report + model selection recommendation
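
A small harness is usually enough for this phase. The sketch below scores one model variant on a list of (prompt, expected) samples and records latency. `model_fn` is a placeholder for whatever client call you use against Vertex AI, and `is_correct` is your own task-specific check; both are assumptions, not part of any SDK.

```python
import statistics
import time


def evaluate(model_fn, samples, is_correct):
    """Run model_fn over (prompt, expected) pairs and summarize accuracy and latency."""
    latencies_ms = []
    correct = 0
    for prompt, expected in samples:
        start = time.perf_counter()
        answer = model_fn(prompt)
        latencies_ms.append((time.perf_counter() - start) * 1000)
        if is_correct(answer, expected):
            correct += 1
    return {
        "accuracy": correct / len(samples),
        "median_latency_ms": statistics.median(latencies_ms),
        "max_latency_ms": max(latencies_ms),
    }


# Usage with a stand-in model; swap in one function per variant being compared.
samples = [("2+2", "4"), ("capital of France", "Paris")]
fake_model = {"2+2": "4", "capital of France": "Lyon"}.get
print(evaluate(fake_model, samples, lambda answer, expected: answer == expected))
```

Running the same samples through each variant gives you the accuracy, latency, and (with token counts) cost columns for the evaluation report.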

Phase 2: Validation (3-4 Weeks)

Goal: Validate the end-to-end pipeline with real data

- Build a complete RAG pipeline (if integrating with internal knowledge bases)

- Run batch testing with real data, measuring accuracy and edge cases

- Conduct security and compliance review

- Evaluate whether fine-tuning is needed (RAG + prompt engineering suffices for most cases)

- Run preliminary cost modeling based on actual token usage

Deliverable: Technical feasibility report + security/compliance assessment + cost projection

Phase 3: Pilot (4-6 Weeks)

Goal: Validate production readiness in a controlled environment

- Deploy to production-grade architecture (but with limited scope)

- Open to 10-20% of internal users or a specific department

- Monitor key metrics: response quality, latency, error rate, user satisfaction

- Collect user feedback, iteratively improve prompts and system design

- Finalize deployment decision (Vertex AI vs self-hosted)

Deliverable: Pilot report + final deployment architecture + go-live plan

Phase 4: Full Deployment (2-4 Weeks)

Goal: Go live and establish continuous improvement

- Deploy to production per final architecture

- Configure monitoring alerts (latency > threshold, error rate > threshold)

- Establish on-call rotation and incident response procedures

- Define model update strategy (testing and upgrade process when new versions release)

- Schedule regular cost and performance reviews for ongoing optimization

Deliverable: Go-live documentation + operations runbook + continuous improvement plan
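
The monitoring alerts in this phase boil down to comparing a window of recent requests against thresholds. Here is a minimal sketch using a nearest-rank 95th percentile; the 2,000 ms and 2% limits are placeholder values I picked for illustration, and in practice these rules would live in your monitoring stack rather than application code.

```python
def p95(values):
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def check_alerts(latencies_ms, error_count, p95_limit_ms=2000.0, error_rate_limit=0.02):
    """Return alert messages for one monitoring window (one latency per request)."""
    alerts = []
    total = len(latencies_ms)
    if total == 0:
        return alerts
    observed_p95 = p95(latencies_ms)
    if observed_p95 > p95_limit_ms:
        alerts.append(f"p95 latency {observed_p95:.0f} ms exceeds {p95_limit_ms:.0f} ms")
    error_rate = error_count / total
    if error_rate > error_rate_limit:
        alerts.append(f"error rate {error_rate:.1%} exceeds {error_rate_limit:.1%}")
    return alerts
```

Alerting on a percentile rather than the mean keeps a handful of slow requests from hiding behind many fast ones.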

The four phases add up to 11-17 weeks, so the entire process from evaluation to full deployment conservatively takes 3-4 months, and potentially 5-6 months for complex scenarios. The key isn't speed; it's having clear go/no-go decision points at each stage.

Frequently Asked Questions

Can Gemma 4 handle languages other than English? How's the quality?

Yes. Gemma 4's training data includes substantial multilingual content. The 31B and 26B MoE variants deliver near-commercial-API quality across major languages including Chinese, Japanese, Korean, German, French, and Spanish. E4B's multilingual capability is slightly weaker but still sufficient for customer service conversations. For language-specific use cases, fine-tuning with domain data can improve quality by 10-20%.

How much budget does enterprise Gemma 4 adoption require?

It depends on scale. During the PoC phase with Vertex AI, monthly costs typically stay under $50. For production deployment on self-hosted GPU (single RTX 5090 workstation), expect ~$5,000 upfront plus ~$250-580/month for electricity and ops labor, or ~$390-720/month with the hardware amortized over three years. For Vertex AI cloud deployment, there's no upfront cost, with monthly expenses ranging from $100 to $1,000 depending on usage volume.

Do we need to train our own model?

Most enterprise scenarios don't require it. Gemma 4's pre-trained variants paired with RAG (Retrieval-Augmented Generation) and prompt engineering typically cover 80-90% of requirements. Fine-tuning is only worth considering for highly specialized domain knowledge (specific legal codes, medical terminology). For fine-tuning details, see the Gemma 4 Fine-Tuning Guide.

How does Gemma 4 compare to commercial APIs (GPT-4o, Claude, Gemini)?

On most benchmarks, the 31B Dense variant approaches commercial APIs, and on some it exceeds them. Commercial APIs still have the edge in model scale, more comprehensive safety filtering, and zero operational overhead. If your core requirements are data sovereignty and cost control, Gemma 4 is the better choice. If you want peak quality and don't mind data leaving your premises, commercial APIs still have their place.

Conclusion: From "Should We Do This?" to "How Do We Do This?"

In 2026, the enterprise AI question has shifted from "should we use AI?" to "how and with what?" Gemma 4's Apache 2.0 license, multi-size model family, and near-commercial-grade performance have dramatically lowered the barrier to building enterprise AI in-house.

The most important takeaway: don't try to do everything at once. Start with one concrete use case, complete the 4-phase validation process, confirm business value, then expand. I've seen too many organizations try to "AI-ify everything" from day one, only to end up doing nothing well.

Ready to start your Gemma 4 adoption journey? Begin with the Gemma 4 Complete Guide to build foundational understanding, then book a free consultation so we can plan the optimal rollout together.
