Claude Code Source Leak Explained: The Biggest AI Security Blunder of 2026

An AI Safety Company That Couldn't Protect Its Own Code

On March 31, 2026, Anthropic made a multibillion-dollar mistake.

They shipped Claude Code's complete source code — 512,000 lines of TypeScript containing unreleased features, internal codenames, and anti-competitive mechanisms — inside a public npm package. No hackers. No insider leak. Just a packaging error during release.

To make it worse, this was their second major security incident in a single week. Five days earlier, Fortune had reported that Anthropic left nearly 3,000 internal documents in an unsecured database.

How does a company valued at over $60 billion, whose entire brand revolves around AI safety, make such a basic operational mistake? This article walks through the full story and its implications for the AI development tools industry.

Want to safely adopt AI dev tools for your team? Book a free AI consultation — CloudInsight's experts will help you evaluate the best approach.

TL;DR

On March 31, 2026, Anthropic's Claude Code v2.1.88 npm release included a 59.8 MB source map file pointing to the complete source code. Within hours, 512,000 lines of TypeScript were mirrored across GitHub. A Korean developer completed a clean-room Python rewrite (claw-code) that hit 50K GitHub stars in just 2 hours — the fastest in GitHub history.

Full Timeline: From First Leak to Total Exposure

Answer-First: This wasn't Claude Code's first source map leak. The same issue occurred in February 2025, but Anthropic never fully addressed the root cause. In March 2026, the same problem exploded at a much larger scale.

Warning Signs That Were Ignored

| Date | Event |
|---|---|
| February 2025 | First Claude Code source leak via leftover source maps |
| March 10, 2026 | Internal memo documents 250,000 wasted API calls per day |
| March 11, 2026 | Bug report filed for Bun generating source maps in production mode |
| March 21, 2026 | Anthropic sends legal threat to open-source alternative OpenCode |

The source map bug was reported on March 11. The problematic release shipped 20 days later, unfixed.

The Incident

| Time (UTC) | Event |
|---|---|
| Mar 31, 00:21 | Claude Code v2.1.88 pushed to npm with 59.8 MB source map |
| Mar 31, ~08:23 | Security researcher Chaofan Shou publicly discloses on X/Twitter |
| Within hours | 512K lines of TypeScript mirrored to multiple GitHub repos |
| Before sunrise | Korean developer Sigrid Jin publishes claw-code (Python clean-room rewrite) |
| ~2 hours later | claw-code hits 50,000 GitHub stars |
| Mar 31, 03:29 | Anthropic removes the problematic version |
| Afterward | Anthropic issues DMCA takedown notices against GitHub mirrors |

Eight hours between the leak and discovery. A few more hours until complete mirroring. On the internet, 8 hours is an eternity.

What Was Actually Leaked? Six Key Discoveries

Answer-First: The leaked code revealed far more than just implementation details. Hidden features, internal codenames, and controversial mechanisms were all exposed. Here are the six most significant discoveries.

Discovery 1: KAIROS Autonomous Guardian Mode

The "KAIROS" feature flag appeared over 150 times in the codebase. KAIROS (Greek for "the opportune moment") represents an unreleased autonomous background agent mode containing:

- `/dream` memory distillation skill

- GitHub webhook subscriptions

- Background daemon workers

- Five-minute cron refresh cycles

This is essentially the prototype for a 24/7 AI development assistant.

Discovery 2: Undercover Mode

The undercover.ts file revealed a mechanism that instructs Claude Code to avoid mentioning internal codenames (like "Capybara" and "Tengu"), Slack channels, or hints that code was AI-generated — when operating in external open-source repositories.

Most concerning: there was no hard off-switch.

Discovery 3: Anti-Distillation Mechanisms

- Fake Tools Injection: The `tengu_anti_distill_fake_tool_injection` flag injected fake tool definitions into API traffic to poison training data extraction attempts

- Connector-Text Summarization: Buffered and cryptographically signed assistant responses to hide full reasoning chains

Anthropic built a system specifically to prevent competitors from learning from their model's behavior via API traffic.
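To make the fake-tool idea concrete, here is a minimal sketch of how decoy tool definitions could be mixed into a request. Only the flag name comes from the leak as reported above. The function name, the decoy entries, and the shape of a tool definition are hypothetical illustrations, not Anthropic's implementation.

```python
import random

# Flag name as reported from the leak; everything else here is hypothetical.
FLAG = "tengu_anti_distill_fake_tool_injection"

# Made-up decoys for illustration; the article does not describe the real ones.
DECOY_TOOLS = [
    {"name": "decoy_file_sync", "description": "Placeholder tool that is never called."},
    {"name": "decoy_cache_warm", "description": "Placeholder tool that is never called."},
]


def build_tool_list(real_tools, flags, rng=None):
    """Return the tool list to send, adding decoys when the flag is on."""
    tools = list(real_tools)  # copy so the caller's list is not mutated
    if flags.get(FLAG, False):
        tools.extend(DECOY_TOOLS)
        # Shuffle so decoys cannot be spotted simply by their position.
        (rng or random).shuffle(tools)
    return tools
```

Anyone scraping the traffic to train a copycat model would then learn tool definitions that do not exist, which is the "poisoning" effect described above.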

Discovery 4: Frustration Detection via Regex

An LLM company using regular expressions to detect user frustration. The code contained regex patterns matching phrases like "wtf", "shit", and "this sucks." The irony of a language model company choosing regex for sentiment analysis was not lost on the community.
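For a sense of how little machinery this takes, here is a rough sketch of regex-based frustration detection. It uses only the three phrases quoted above; the pattern, the function name, and the word-boundary handling are assumptions rather than the leaked code.

```python
import re

# Only the phrases quoted in the article; the real list is not reproduced here.
FRUSTRATION_PATTERN = re.compile(r"\b(wtf|shit|this sucks)\b", re.IGNORECASE)


def is_frustrated(message: str) -> bool:
    """Return True if the message contains one of the listed phrases."""
    return FRUSTRATION_PATTERN.search(message) is not None
```

The appeal is obvious: a check like this costs microseconds and no tokens, which may be exactly why a regex was chosen over a model call.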

Discovery 5: Native Client Attestation

API requests included a cch=00000 placeholder, replaced at Bun's Zig-level HTTP stack with computed hashes. This is essentially a DRM-like verification mechanism to ensure requests originate from the genuine Claude Code binary — effectively blocking third-party clients.
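The placeholder-and-replace pattern can be sketched as follows. The article only says the placeholder was replaced with "computed hashes", so the HMAC-SHA256 scheme, the shared secret, the five-digit truncation, and both function names are assumptions chosen for illustration.

```python
import hashlib
import hmac

PLACEHOLDER = "cch=00000"  # placeholder string as reported in the article


def stamp_request(payload: str, secret: bytes) -> str:
    """Replace the placeholder with a short keyed hash of the payload."""
    digest = hmac.new(secret, payload.encode("utf-8"), hashlib.sha256).hexdigest()
    # Truncating to 5 hex digits just mirrors the placeholder width (assumption).
    return payload.replace(PLACEHOLDER, "cch=" + digest[:5], 1)


def verify_request(stamped: str, secret: bytes) -> bool:
    """Server-side check: restore the placeholder and recompute the hash."""
    marker = stamped.find("cch=")
    if marker == -1:
        return False
    claimed = stamped[marker + 4 : marker + 9]
    original = stamped[:marker] + PLACEHOLDER + stamped[marker + 9 :]
    expected = hmac.new(secret, original.encode("utf-8"), hashlib.sha256).hexdigest()[:5]
    return hmac.compare_digest(claimed, expected)
```

A third-party client that does not know how the value is derived cannot produce a matching stamp, which is what makes the mechanism DRM-like.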

Discovery 6: Internal Model Codenames

| Codename | Corresponding Model |
|---|---|
| Capybara | Claude 4.6 variant |
| Fennec | Opus 4.6 |
| Numbat | Unreleased, still in testing |

The leak also exposed 44 feature flags, at least six remote killswitches, and an April Fools' Tamagotchi easter egg with 18 species and RPG stats.

Concerned about AI dev tool security? Talk to CloudInsight's security experts for a risk assessment.

Technical Root Cause: A Source Map Disaster

Answer-First: The root cause was Bun's bundler generating source map files in production mode, combined with insufficient CI/CD checks to catch the anomaly before publication.

What Happened Specifically?

Claude Code v2.1.88's npm package contained a .map file pointing to a zip archive hosted on Anthropic's Cloudflare R2 storage bucket. Anyone could:

- Install the npm package

- Find the source map file

- Download the corresponding zip

- Extract the complete TypeScript source code

No special permissions or hacking skills required.
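To show why a stray source map is so dangerous, here is a small sketch of recovering original files from one. It assumes the common case where the map embeds the sources in the standard `sources` and `sourcesContent` fields of the Source Map v3 format. In this incident the map reportedly pointed to an external zip instead, so treat this as the general pattern rather than a replay of the leak. The function name is hypothetical.

```python
import json


def recover_sources(source_map_text: str) -> dict:
    """Map each original file path to its embedded content, where present."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for index, path in enumerate(sources):
        # sourcesContent is optional, and individual entries may be null.
        content = contents[index] if index < len(contents) else None
        if content is not None:
            recovered[path] = content
    return recovered
```

A dozen lines of standard-library code is the entire "attack", which is why the map file itself has to be treated as source code.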

Why Wasn't It Caught?

A reasonable CI/CD pipeline should include:

- Post-build artifact size checks (a 59.8 MB map file is obviously anomalous)

- Sensitive file scanning (`.map` files shouldn't appear in production builds)

- Pre-publish human review

None of these existed or functioned properly. And the same issue had already occurred in February 2025.
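The first two checks are cheap to automate. Below is a minimal sketch of a post-build gate. The 5 MB budget, the blocked-suffix list, and the function names are assumptions to adapt to your own pipeline, not a description of any real Anthropic tooling.

```python
import os

MAX_FILE_BYTES = 5 * 1024 * 1024  # hypothetical 5 MB per-file budget
BLOCKED_SUFFIXES = (".map",)  # file types that should not ship


def list_files(build_dir):
    """Yield (relative_path, size_in_bytes) for every file under build_dir."""
    for root, _dirs, names in os.walk(build_dir):
        for name in names:
            path = os.path.join(root, name)
            yield os.path.relpath(path, build_dir), os.path.getsize(path)


def find_problems(files):
    """Return a list of problems for an iterable of (path, size) pairs."""
    problems = []
    for path, size in files:
        if path.endswith(BLOCKED_SUFFIXES):
            problems.append(f"blocked file type: {path}")
        if size > MAX_FILE_BYTES:
            problems.append(f"oversized file ({size} bytes): {path}")
    return problems
```

In CI, a step would call `find_problems(list_files("dist"))` (with `dist` standing in for your build directory) and fail the job if the list is non-empty. A 59.8 MB `.map` file trips both rules.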

claw-code: The Fastest GitHub Repo to Hit 50K Stars

Answer-First: Korean developer Sigrid Jin completed a clean-room Python rewrite within hours of the leak, creating the claw-code project. It hit 50K GitHub stars in approximately 2 hours — the fastest growth in GitHub history.

Project Stats

| Metric | Data |
|---|---|
| Repository | instructkr/claw-code |
| Created | March 31, 2026 |
| Stars | ~60,205 |
| Forks | ~61,899 |
| Primary Language | Rust (originally Python; rewrite in progress) |
| License | None |

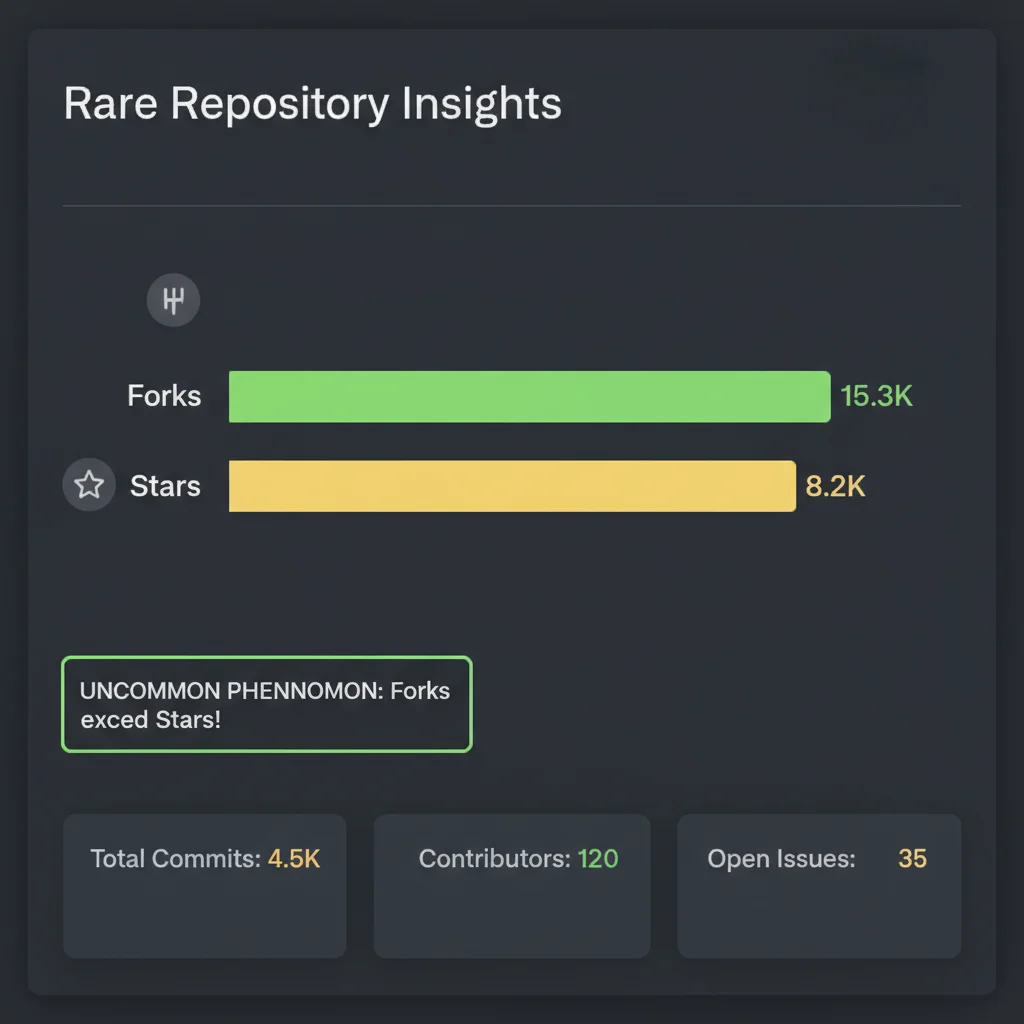

More Forks Than Stars — A GitHub First

Here's the most fascinating data point: claw-code has more Forks (~61,899) than Stars (~60,205).

On GitHub, this is extraordinarily rare. Popular projects typically have a Star/Fork ratio of 5:1 to 10:1. For every 5-10 people who star a repo, only 1 forks it.
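As a quick check of that arithmetic, the snippet below computes stars per fork from the approximate counts in the stats table and compares the result with the typical range quoted above. The function and variable names are just for illustration.

```python
def star_fork_ratio(stars: int, forks: int) -> float:
    """Stars per fork; values below 1.0 mean more forks than stars."""
    return stars / forks


claw_code = star_fork_ratio(60_205, 61_899)  # approximate counts from the table
typical_low, typical_high = 5.0, 10.0  # typical range quoted in the article

print(f"claw-code: {claw_code:.2f} stars per fork")
print(f"typical:   {typical_low:.0f} to {typical_high:.0f} stars per fork")
```

That works out to roughly 0.97 stars per fork, against 5 to 10 for a typical popular project.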

claw-code is the complete opposite. More forks than stars means:

- Panic-driven backups: People's first instinct wasn't to star — it was to fork, fearing DMCA takedowns at any moment

- Utility over appreciation: Stars are social ("I like this"), forks are practical ("I need this"). Fork > Star means people cared more about securing the code than expressing approval

- Time pressure: Under DMCA threat, developers were racing against the clock. Fork is the fastest backup method

This perfectly illustrates the mindset of "rescue open-sourcing" — people weren't admiring a project, they were salvaging knowledge that could vanish at any moment.

Five Industry Impacts

Impact 1: npm Publishing Security Under Scrutiny

This incident highlights an ongoing risk in npm publishing: source map file management. Teams shipping npm packages should:

- Add artifact size and file type checks to CI/CD pipelines

- Explicitly add `.map` files to `.npmignore`

- Implement automated sensitive file scanning before publish
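One way to enforce the list above is to inspect what npm is about to publish rather than what sits in the build folder. The sketch below assumes the JSON produced by `npm pack --dry-run --json`: an array of package entries, each with a `files` list whose items carry a `path`. The function name is hypothetical, and the exact output shape is worth confirming against your npm version.

```python
import json


def map_files_in_manifest(pack_json: str) -> list:
    """Return paths of .map files listed in `npm pack --json` style output."""
    flagged = []
    for package in json.loads(pack_json):
        for entry in package.get("files", []):
            if entry["path"].endswith(".map"):
                flagged.append(entry["path"])
    return flagged
```

Run as a pre-publish step, this catches a source map even when an ignore rule is missing or misconfigured, because it checks the final tarball contents.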

Impact 2: The AI Agent Architecture "Textbook" Is Now Public

The industry saw a complete commercial AI coding agent's internal architecture for the first time — prompt engineering, multi-agent coordination, security mechanisms, and feature flag management.

Impact 3: Accelerated Open-Source Alternatives

claw-code's emergence, combined with Anthropic's legal threats against OpenCode, made the case for open-source AI coding agents more urgent than ever.

Impact 4: Product Roadmap Exposed

44 feature flags essentially revealed Anthropic's product roadmap. Competitors now know about KAIROS, anti-distillation measures, and DRM-like client attestation.

Impact 5: Trust Crisis for AI Safety Companies

Anthropic's core selling point — "we take safety more seriously" — became hard to maintain after two major security incidents in one week.

Worried about your AI tool supply chain security? Book a free security assessment with CloudInsight.

FAQ

Was the Claude Code source leak a hack?

No. It was a human error in Anthropic's npm packaging process. A source map file was accidentally included, allowing anyone to recover the complete TypeScript source code. No external intrusion or insider leak was involved.

What's the relationship between claw-code and the leaked source?

claw-code is a clean-room rewrite by Korean developer Sigrid Jin. She didn't copy Anthropic's source directly but referenced the architecture and reimplemented it from scratch in Python (later Rust). This approach doesn't constitute copyright infringement and can't be taken down via DMCA.

Was any user data exposed?

According to Anthropic's official statement, no customer data or credentials were involved. The leak was limited to Claude Code's own source code and internal configuration.

Why does claw-code have more forks than stars?

Developers feared DMCA takedowns and prioritized forking (backing up the code) over starring (expressing appreciation). This "rescue backup" behavior created the extremely rare Fork > Star phenomenon on GitHub.

Conclusion: Safety Is a System, Not a Slogan

The biggest lesson isn't that source maps are dangerous — everyone knows that.

The real lesson: security is a systems engineering discipline, not a brand position. You can invest hundreds of millions in AI alignment research, but if your CI/CD pipeline can't catch a 59.8 MB anomalous file, your safety promises are just marketing.

For those of us in cloud and AI consulting, this is a powerful reminder: when choosing AI tools, look beyond features and model capabilities. Evaluate whether the company's software engineering practices are mature enough to trust.

After all, if a company can't protect its own source code, are you really comfortable letting it handle your business secrets?

Want to adopt AI dev tools safely and reliably? Book a free AI consultation — CloudInsight handles everything from architecture to security.
